Communication Is Key

No, this article is not about relationship advice for couples. 😉

But if you’ve ever heard a relationship counselor say that most tensions come from unspoken expectations and untested assumptions — keep that thought. It’s about to be surprisingly relevant.

This article is about how most of us are “using AI wrong”. Not because we lack skill or because the models aren’t good enough, but because we’ve been trained to think in terms of prompts when we should be thinking in terms of conversations.

I built a conversation protocol that fixes this — a reusable prompt you can paste into any AI to switch it from “execute immediately” mode to “let’s think together first” mode. It’s free, it works with Claude, ChatGPT, Gemini, or any other model, and I’m sharing it at the end of this article.

If you just want the tool, skip ahead. If you want to understand why it works and why prompt engineering alone keeps failing you — read on.

See for Yourself

Before we get into the what, why, and how — take 30 seconds to compare these two real-world examples. Same task, same AI, same person. The only difference is the approach.

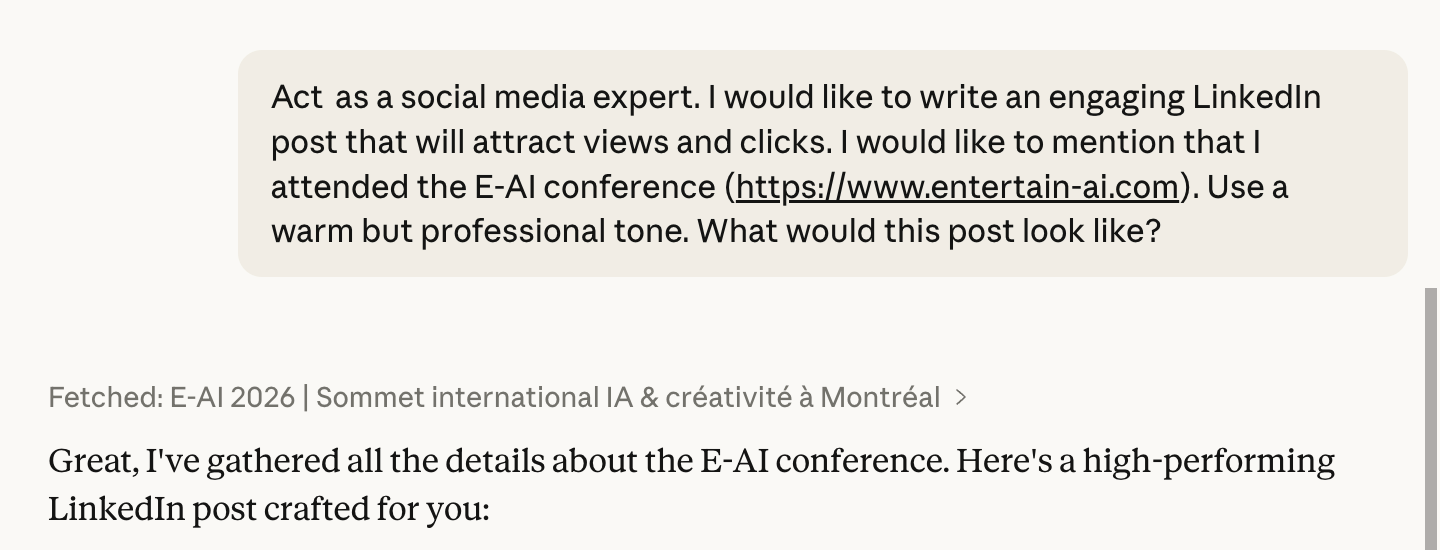

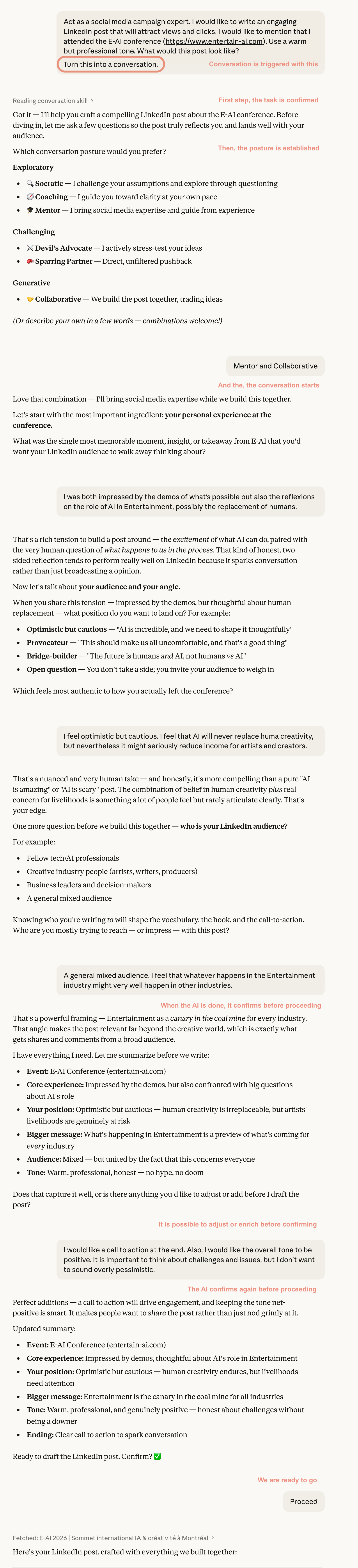

Prompt-only: I asked an AI to write a LinkedIn post about a conference I (fictitiously) attended. The AI immediately fetched the conference website and produced a post. Done in one shot.

Conversation-first: Same request, but this time I asked the AI to have a conversation with me before writing. It asked about my audience, my angle, what moment stood out, what tone I wanted, what I wanted readers to do after reading. By the time it wrote the post, it had context I wouldn’t necessarily have thought to include in a prompt.

You can probably guess which output was more relevant. The second post had context behind it that the first one never got, and context is what determines the quality of the result.

The Prompt Engineering Trap

Prompt engineering has become the default model for working with AI. The idea is straightforward: if you craft the right instruction — precise enough, structured enough, with enough context and constraints — the AI will produce what you need. And it works well enough. Sometimes remarkably well.

But it has a fundamental flaw that most of us bump into without ever naming it: it puts the entire burden of clarity on us.

We’re expected to anticipate what the AI needs to know. We’re expected to specify the format, the tone, the constraints, the edge cases. We’re expected to front-load every relevant detail into a single, perfectly crafted instruction. And when the result isn’t quite right, the diagnosis is always the same: our prompt wasn’t good enough. “Try again. Be more specific. Add more context. Iterate.”

The problem is, we don’t always know what we don’t know.

A 2025 study from Wharton confirmed what many practitioners sense intuitively — prompt engineering is “complicated and contingent.” Techniques that improve results in one context can actually harm performance in another. There is no universal formula.

So maybe the quest for the perfect prompt is a fool’s errand.

Where the Failures Actually Come From

When AI produces a disappointing result, the instinct is to blame the model or the prompt. But the evidence points somewhere else entirely: most prompt failures come from ambiguity, not from model limitations.

The AI isn’t failing to understand you. It’s filling in the blanks you left — silently, confidently, and in ways you never intended.

When you write a one-shot prompt, you make decisions about what to include and what to leave out. Everything you leave out becomes a gap the AI fills with its own assumptions. And the real issue is that you never see those assumptions. The output looks complete and polished, so you accept it — unaware that the AI quietly decided your audience, your tone, your scope, or your priorities for you.

In a relationship, we’d call that “not communicating.” The result is the same: both parties think they’re aligned, and neither realizes they’re not — until the damage is done.

From Prompts to Conversations

In May 2024, Google researchers Tanya Kraljic and Michal Lahav published a paper in ACM Interactions titled “From Prompt Engineering to Collaborating.” Their argument: the future of human-AI interaction isn’t about writing better instructions. It’s about shifting from a command-based model, where the human specifies and the AI executes, to a collaborative model where both parties share the effort of shaping intent.

That matches something I’ve observed myself. My best results from AI never came from a single, brilliant prompt. They came from talking it through first — exploring the problem, surfacing my own assumptions, letting the AI ask me questions before it started working.

So I formalized the approach. I built a structured conversation protocol — a set of steps that forces both the human and the AI to explore context before any task execution begins.

The first time I used it on a real project, the difference was obvious. Not a marginal improvement — a fundamentally better output, because the AI finally understood what I actually needed rather than what I’d managed to type into a text box.

The Approach: Context Before Execution

The core idea is simple: separate the thinking from the doing.

In a traditional prompting workflow, you write an instruction and the AI immediately acts on it. Thinking and doing happen simultaneously — and because the AI is eager to be helpful, it fills every gap with assumptions rather than questions. Without telling you.

In a conversational approach, you explicitly create a phase of exploration before execution. The AI doesn’t produce any output yet. Instead, it asks questions — one at a time, building on your answers — until both of you have a shared understanding of what needs to happen. Only then does execution begin. And the result is anchored in a context that neither of you could have built alone.

Think of it like a couple planning a vacation. If you discuss every detail before booking (budget, destinations, dates, the kind of experience you want), the booking itself is quick and accurate. But if you start booking immediately and try to fix things turn by turn (“actually, change the hotel,” “wait, different dates,” “can we add a stop?”), every change risks breaking something else. The conversational approach front-loads the exploration, so the first execution is already dramatically better.

How It Works in Practice

I built this as a reusable protocol — a block of text you paste before any task, in any AI. It has six steps:

1. Acknowledge and hold. The AI confirms it understood the task and explicitly does not begin. This is the most important step. Without it, the AI’s default instinct is to immediately start producing output.

2. Diagnose silently. Before asking anything, the AI assesses where you are: clear and precise? Vague and exploratory? Unaware of your own gaps? This shapes how deep the conversation goes.

3. Choose a posture. You pick how you want the AI to engage: as a Socratic questioner, coach, mentor, devil’s advocate, sparring partner, or collaborative builder. Combinations work too. This matters more than you’d think: a “coaching” conversation and a “devil’s advocate” conversation surface very different things.

4. One question at a time. The AI asks a single question, waits, and builds the next one on what you revealed. No bundling three questions into one message. If your answers contradict each other, the AI flags it.

5. Alignment checkpoint. When the AI has enough material, it summarizes its understanding of what you need and invites you to correct it. No work begins until you confirm.

6. Execute — once. The AI produces the output. If your thinking evolved during the conversation — and it often does — the output reflects where you landed, not where you started.
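For readers who think in code, the six steps can be read as a small state machine with two gates: no questions until a posture is chosen, and no execution until the summary is confirmed. The Python below is only an illustrative sketch, not the protocol itself. The `Phase` and `Conversation` names, their fields, and the error messages are all assumptions made up for this example.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional, Tuple


class Phase(Enum):
    ACKNOWLEDGE = 1  # confirm the task and explicitly do not begin
    DIAGNOSE = 2     # silently assess how clear the request is
    POSTURE = 3      # the user picks coach, devil's advocate, etc.
    QUESTIONS = 4    # one question per turn
    CHECKPOINT = 5   # summarize and wait for confirmation
    EXECUTE = 6      # produce the output once
    DONE = 7


@dataclass
class Conversation:
    """Hypothetical model of the protocol's phases (illustration only)."""
    task: str
    phase: Phase = Phase.ACKNOWLEDGE
    posture: Optional[str] = None
    answers: List[Tuple[str, str]] = field(default_factory=list)
    confirmed: bool = False

    def advance(self) -> None:
        """Move to the next phase, enforcing the two gates."""
        if self.phase is Phase.POSTURE and self.posture is None:
            raise RuntimeError("choose a posture before questions begin")
        if self.phase is Phase.CHECKPOINT and not self.confirmed:
            raise RuntimeError("no work begins until the user confirms")
        if self.phase is Phase.DONE:
            raise RuntimeError("the protocol executes once")
        self.phase = Phase(self.phase.value + 1)

    def record_answer(self, question: str, answer: str) -> None:
        """Store a single question and its answer (step 4)."""
        if self.phase is not Phase.QUESTIONS:
            raise RuntimeError("questions belong to the QUESTIONS phase")
        self.answers.append((question, answer))


convo = Conversation(task="Write a LinkedIn post about a conference")
convo.advance()            # ACKNOWLEDGE -> DIAGNOSE
convo.advance()            # DIAGNOSE -> POSTURE
convo.posture = "coach"
convo.advance()            # POSTURE -> QUESTIONS
convo.record_answer("Who is the audience?", "IT leaders")
convo.advance()            # QUESTIONS -> CHECKPOINT
convo.confirmed = True     # the user approved the summary
convo.advance()            # CHECKPOINT -> EXECUTE
print(convo.phase.name)    # EXECUTE
```

The point of the sketch is the two `RuntimeError` gates: skipping the posture choice or the confirmation is treated as an error rather than something to be patched up after the output exists.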

Why This Works

Two things happen in a conversation that never happen in a one-shot prompt.

The AI’s assumptions become visible. Instead of silently filling gaps, the AI is forced to surface them as questions. Every question it asks is an assumption it didn’t make — and each one gives you the chance to steer the result before it’s produced.

Your own assumptions become visible — to you. This is the part that surprised me. When the AI asks “who is the audience for this?” or “what should take priority — speed or thoroughness?”, it forces you to articulate things you might have left vague even in your own head. You discover what you actually want by being asked to explain it. The conversation is a mirror.

This is why the approach outperforms prompt engineering even when the prompt engineer is skilled. You can’t specify what you haven’t thought through yet. But you can think it through in conversation.

Try It Yourself

The conversation protocol is free, and I’m offering it in two formats.

The chat prompt works with any AI — Claude, ChatGPT, Gemini, whatever you use. Copy and paste the prompt text into your AI chat, then add your task where it says “[Replace with your task]”. The AI will stop, acknowledge your task, and start asking questions instead of producing output. Once you’ve both explored the context, it executes — once — with everything it learned.
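If you would rather script the copy-and-paste step, it comes down to a single placeholder substitution. The snippet below is a minimal sketch under stated assumptions: `STAND_IN_PROTOCOL` is a made-up two-line stand-in, not the actual protocol text, and `build_chat_prompt` is a hypothetical helper name. Only the “[Replace with your task]” placeholder comes from the prompt described above.

```python
PLACEHOLDER = "[Replace with your task]"

# Stand-in text for illustration only; the real protocol is the
# downloadable prompt, which is not reproduced here.
STAND_IN_PROTOCOL = (
    "Before doing any work, ask me one question at a time.\n"
    "Task: " + PLACEHOLDER
)


def build_chat_prompt(protocol_text: str, task: str) -> str:
    """Insert the task where the protocol text has its placeholder."""
    task = task.strip()
    if not task:
        raise ValueError("task must not be empty")
    if PLACEHOLDER not in protocol_text:
        raise ValueError("placeholder not found in protocol text")
    # Replace only the first occurrence so the rest of the text is untouched.
    return protocol_text.replace(PLACEHOLDER, task, 1)


prompt = build_chat_prompt(
    STAND_IN_PROTOCOL, "Write a LinkedIn post about a conference"
)
print(prompt)
```

The two checks exist because a forgotten placeholder or an empty task would otherwise send the AI a protocol with nothing to talk about.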

Download the conversation prompt

The Claude skill is for those who use Claude specifically. Instead of copy-pasting, you install it once and simply say “turn this into a conversation” before any task. To install it, go to Customize → Skills, click the “+” button, and upload the skill file. It will then be available to all your chats and projects in Claude.

Download the Claude skill

The Bigger Picture

I’ve spent most of this article on the practical mechanics, and that was intentional. This is a technique you can use today, with any AI, and see the difference on your next task.

But there’s a broader pattern worth naming. We tend to treat AI as a tool to be operated — write the instruction, get the output, evaluate, repeat. That’s a command-and-control model, and it caps the value AI can deliver at whatever the operator can think to ask for.

The people getting the most from AI treat it differently. They treat it as something you think with, not just something you delegate to. A conversation before execution is the simplest expression of that shift. No new tools, no new infrastructure. Just a willingness to talk before you type.

And if that sounds like relationship advice — well, I did warn you at the start.

About the Author

André Boisvert

CIO & Strategic Consultant

CIO and strategic consultant helping organizations navigate AI, digital transformation, and IT strategy. Sharing weekly strategic perspectives on enterprise technology.
